Here is an inflammatory post from Reddit (I know, not very original):

The phrase “… Perl users were unable to write programs more accurately than those using a language designed by chance” is an actual quotation from a paper which seems to have been written with low standards in mind.

In the past, I wondered if Perl has to suck for people to be happy with their own choices. In this paper, Stefik et al. seem to have taken that argument to heart in pushing a pet language called Quorum.

The phrase “Perl users” in the quotation above is misleading. Stefik et al. did not recruit Perl users (in the commonly understood sense of the word) to write programs: “We compared novices that were programming for the first time using each of these languages …” Therefore, the quotation above ought to have read “Participants assigned to the Perl treatment …”

Their technique is intriguing:

Participants completed a total of six experimental tasks (see Table 1). In the first three, participants were allowed to reference and use the code samples shown in Figure 1. Seven minutes were allotted to complete each of the first three tasks. Once time was up, participants were given an answer key. This somewhat mimics the idea of finding a working example on the Internet then trying to adapt it for another purpose. For the final three tasks, use of the code samples was not allowed, no solutions were given, and ten minutes was allotted for each task.

Here is one of their sample Perl programs:

    $x = &z(1, 100, 3);

    sub z {
        $a = $_[0];
        $b = $_[1];
        $c = $_[2];
        $d = 0.0;
        $e = 0.0;
        for ($i = $a; $i <= $b; $i++) {
            if ($i % $c == 0) {
                $d = $d + 1;
            }
            else {
                $e = $e + 1;
            }
        }
        if ($d > $e) {
            $d;
        }
        else {
            $e;
        }
    }

The program is described as “This code will count the number of values that are and are not divisible by c and lie between a and b. It then compares the number of values that are and are not divisible by c and makes the greater of them available to the user”.

That explanation is hard to understand, but it is equally bad for all languages, so we can assume it does not affect their results.
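For readers who do not read Perl, here is a rough restatement of what the sample computes. This is my own Python transcription, not code from the paper: the function and variable names are invented for readability, and it counts with integers where the Perl sample starts its counters at 0.0.

```python
def count_majority(low, high, divisor):
    """Return the larger of two counts over the integers low..high inclusive:
    how many are divisible by divisor, and how many are not."""
    divisible = 0
    not_divisible = 0
    for value in range(low, high + 1):
        if value % divisor == 0:
            divisible += 1
        else:
            not_divisible += 1
    # Explicit return; the Perl sample instead relies on the value of the
    # last expression evaluated in the sub.
    return divisible if divisible > not_divisible else not_divisible


result = count_majority(1, 100, 3)
print(result)  # 33 multiples of 3 and 67 non-multiples, so this prints 67
```

With the arguments used in the sample, (1, 100, 3), the two counts are 33 and 67, so the value made "available to the user" is 67.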

Six participants were assigned to each of the three languages: the two fake languages and the one real language, Perl.

Now, here is where we run into a problem with engineer and programmer types dabbling in Stats and Econ. Because the arithmetic is rather simple and they know how to use a computer, most such people automatically assume they are doing the right thing. It’s just a formula, plug in some numbers, get some numbers out, check them against a threshold, and you are done.

It is definitely true that you can get good grades in a lot of Stats classes this way, because the world is full of such students and of disaffected teachers who have long ago abandoned any desire to communicate the fine points of statistical analysis. One only needs to look at well-known examples such as the Hockey Stick temperature graph, the connection between portion sizes and obesity, neck thickness and ED, etc., to realize how dominated the world is by people who are really good at plugging numbers into formulae until desired results are obtained.

Six people trying their hand at each of the languages is rather few. The comparisons are made across participants, which means the tool is not the only thing that varies across treatments.

Their between-subjects test pitting one language against the other requires “the assumption that the difference scores of paired levels of the repeated measures factor have equal population variance.” They test this using Mauchly’s test, which has really low power in small samples. That is, it is the kind of test that will fail to tell you that you have violated the assumptions in precisely this kind of situation.

With only 18 participants, the authors could have provided a full list of scores by task and language, instead of using measures such as average and standard deviation that are highly sensitive to high and low values.
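To see how sensitive the average and standard deviation are with only six observations, here is a minimal sketch. The scores below are hypothetical placeholders made up for illustration; they are not data from the paper, and `summarize` is just a helper name I chose.

```python
import statistics


def summarize(scores):
    """Return mean, sample standard deviation, and median for a list of scores."""
    return {
        "mean": statistics.mean(scores),
        "sd": statistics.stdev(scores),      # sample SD (n - 1 in the denominator)
        "median": statistics.median(scores),
    }


# HYPOTHETICAL scores in [0, 1], NOT taken from the paper.
base = [0.4, 0.4, 0.5, 0.5, 0.6, 0.6]
# Same group, except one participant scores 1.0 instead of 0.6.
one_high = [0.4, 0.4, 0.5, 0.5, 0.6, 1.0]

for label, scores in (("base", base), ("one high score", one_high)):
    s = summarize(scores)
    print(f"{label:>14}: mean={s['mean']:.3f} sd={s['sd']:.3f} median={s['median']:.3f}")
```

Changing a single one of the six values moves both the mean and the standard deviation, while the median stays put. A full list of scores would let readers see that for themselves.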

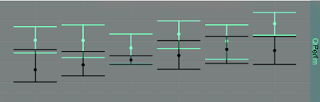

Their only display of data, box-plots stacked on top of one another, does not convey information in an easily comprehensible manner. Giving the reader a standard deviation calculated from six observations confined to the extremely compact range [0,1] is nonsense. For example, the average score of participants in the Perl treatment is 0.432, whereas the average score for participants in the Quorum group is 0.628. The sample standard deviation for the six participants in the Quorum group is 0.198, which means the average score for the Perl group is within one sample standard deviation of the Quorum group's average. How much of an overlap is there between these two groups?
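The arithmetic behind that comparison can be checked in a few lines. This sketch uses only the three summary figures quoted above; the variable names are mine.

```python
# Summary figures as quoted in this post.
perl_mean = 0.432
quorum_mean = 0.628
quorum_sd = 0.198  # sample standard deviation of the Quorum group

# Gap between the two group averages.
diff = quorum_mean - perl_mean
# The same gap expressed in units of the Quorum group's sample SD.
diff_in_sds = diff / quorum_sd

print(f"difference in means: {diff:.3f}")
print(f"difference in Quorum SDs: {diff_in_sds:.2f}")
print(f"within one SD: {diff < quorum_sd}")
```

The gap between the averages is 0.196, a hair under the Quorum group's sample standard deviation of 0.198.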

I manually laid the Quorum panel on top of the Perl panel in Gimp to check:

Clearly, the average scores of those in the Quorum group are higher for each task than the scores of those in the Perl group. There is, however, also quite a bit of overlap in the box-plots by task. But you only need box-plots when there are a lot of points. Why not just plot each individual’s score? After all, there would be only six points per task per language.
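Plotting six points per group does not need anything fancy. Here is a minimal text-only sketch of the idea; `dot_row` is a helper I made up, and the scores are hypothetical placeholders, not data from the paper (which does not list individual scores).

```python
def dot_row(scores, width=41):
    """Render scores in [0, 1] as one text row.

    Each column covers an equal slice of [0, 1]; a digit shows how many
    scores landed in that column (capped at 9), and '.' means none.
    """
    counts = [0] * width
    for s in scores:
        if not 0.0 <= s <= 1.0:
            raise ValueError(f"score out of range: {s}")
        counts[round(s * (width - 1))] += 1
    return "".join("." if c == 0 else str(min(c, 9)) for c in counts)


# HYPOTHETICAL placeholder scores for one task, NOT data from the paper.
example = {
    "Perl": [0.10, 0.25, 0.40, 0.40, 0.55, 0.80],
    "Quorum": [0.30, 0.50, 0.60, 0.65, 0.75, 0.95],
}

for language, scores in example.items():
    print(f"{language:>7} |{dot_row(scores)}|")
```

One row per language per task would show every participant, and the reader could judge the overlap directly.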

When you have that few observations, a good, descriptive statement of results showing as much data as possible is far preferable to contortions using statistical tests. If your figure does not show the difference, then I do not much care for your test results.

Finally, I have a feeling that using the return statement in the Perl example code might have raised scores a bit. I cannot think of a reason one would not use the return statement in a task like this unless it is a contorted attempt to make Perl look like gibberish.

Regardless of all that, the statement:

Results showed … Perl users were unable to write programs more accurately than those using a language designed with syntax chosen randomly from the ASCII table.

is not supported by a study that looked at people who had never used Perl before.